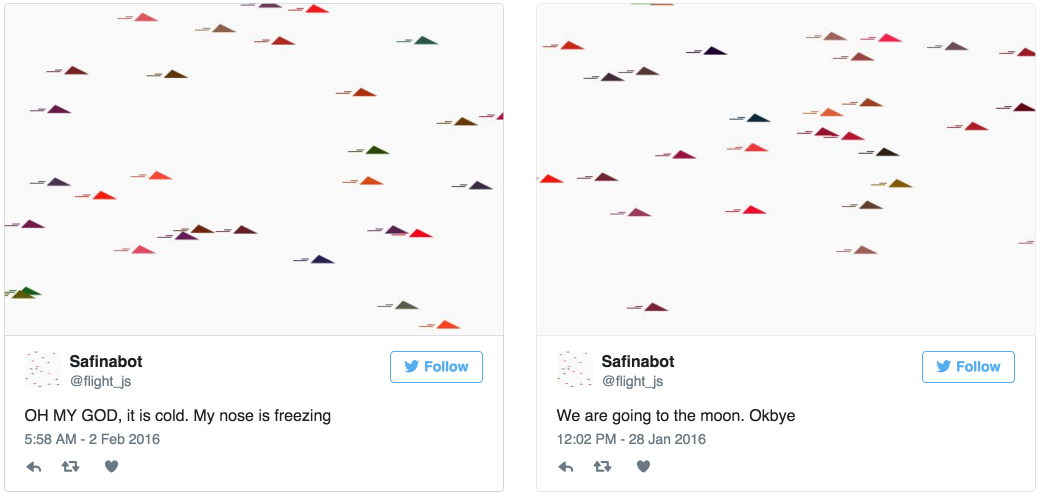

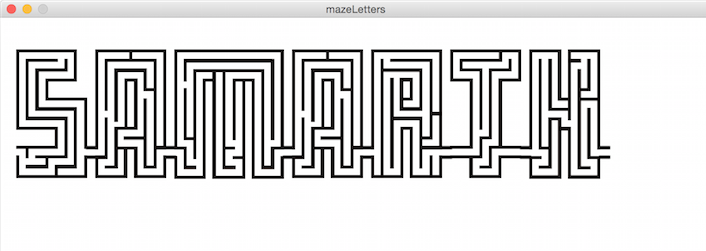

Hi, I am Safinah. I am a graduate student in the Personal Robots Group at the MIT Media Lab. My research studies how tiny humans create with AI and how it influences their creativity. I design and study technologies to foster creative learning. I develop child-robot interactions for behavioral learning such as curiosity, creativity and growth mindset. I design inclusive creative AI literacy curricula and teaching materials for K-12 students and teachers. I like making art, games, and robots. I teach K-12 students about robots and making games.

I am advised by Professor Cynthia Breazeal. Before MIT, I spent time researching education technology, learning games and accessibility at Carnegie Mellon University, Facebook AI Research and Twitch. I am currently a Microsoft Research Fellow, MIT's Teaching Development Fellow (TDF) and MIT's Diversity, Equity and Inclusion (DEI) fellow.

If you are an educator looking for K-12 AI learning materials, see my Creative AI curriculum collection here!

If you are looking for the Creative Human-AI Interaction Guidelines, find my handy printable Design Guideline cards here!

Say hi; don't be shy. Reach out at safinah@mit.edu

Art pick of the week: Shih-Yen Huang

Research

Journal Papers

Children as creators, thinkers and citizens in an AI-driven future.

Safinah Ali, Daniella DiPaola, Irene Lee, Victor Sindato, Grace Kim, Ryan Blumofe, Cynthia Breazeal

Computers and Education: Artificial Intelligence.

Social Robots as Creativity Eliciting Agents.

Safinah Ali, Nisha Devasia, Hae Won Park, Cynthia Breazeal

Frontiers in Robotics and AI

A social robot’s influence on children’s figural creativity during gameplay.

Safinah Ali, Hae Won Park, Cynthia Breazeal

International Journal of Child-Computer Interaction

AI + ethics curricula for middle school youth: Lessons learned from three project-based curricula.

Randi Williams, Safinah Ali, Nisha Devasia, Daniella DiPaola, Jenna Hong, Stephen P Kaputsos, Brian Jordan, Cynthia Breazeal

International Journal of Artificial Intelligence in Education

Integrating ethics and career futures with technical learning to promote AI literacy for middle school students: An exploratory study.

Helen Zhang, Irene Lee, Safinah Ali, Daniella DiPaola, Yihong Cheng, Cynthia Breazeal

International Journal of Artificial Intelligence in Education

Expressive Cognitive Architecture for a Curious Social Robot.

Maor Rosenberg, Hae Won Park, Rinat Rosenberg-Kima, Safinah Ali, Anastasia K Ostrowski, Cynthia Breazeal, Goren Gordon

ACM Transactions on Interactive Intelligent Systems (TiiS)

Peer-reviewed Conference Papers

A Picture is Worth a Thousand Words: Co-designing Text-to-Image Generation Learning Materials for K-12 with Educators

Safinah Ali, Prerna Ravi, Katherine Moore, Cynthia Breazeal, Hal Abelson

Proceedings of Educational Advances in Artificial Intelligence (EAAI-24)

Constructing Dreams using Generative AI

Safinah Ali, Prerna Ravi, Randi Williams, Daniella DiPaola, Cynthia Breazeal

Proceedings of Educational Advances in Artificial Intelligence (EAAI-24)

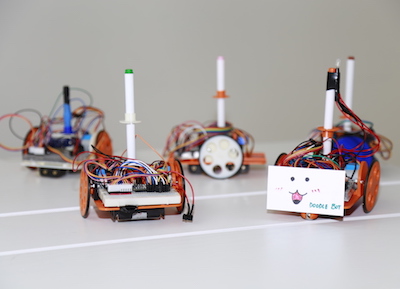

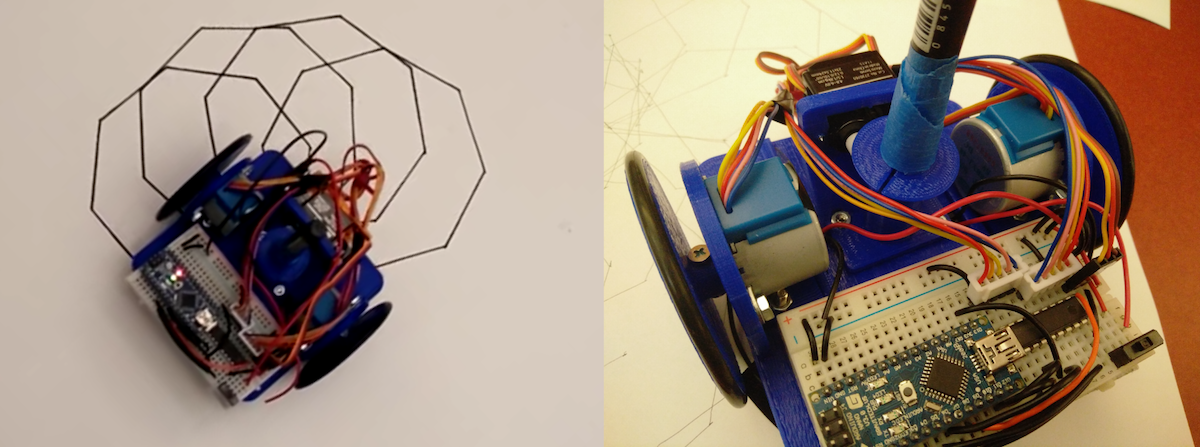

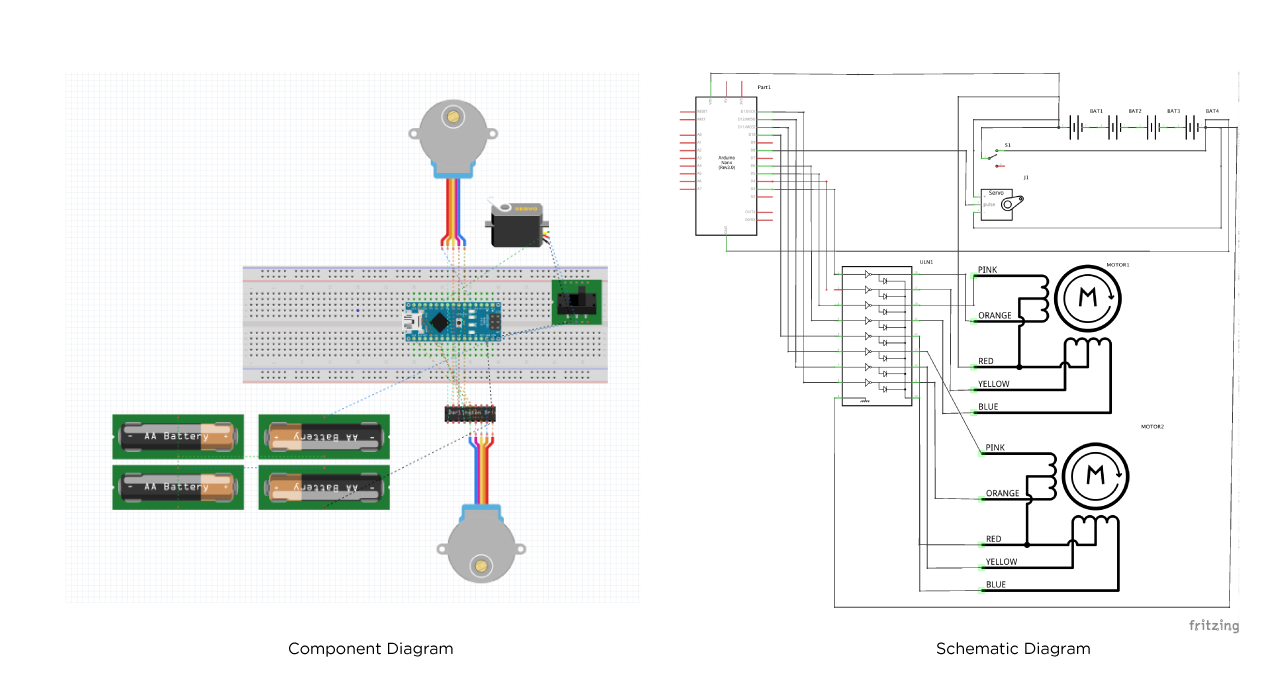

Doodlebot: An educational robot for creativity and AI literacy.

Safinah Ali*, Randi Williams*, Raúl Ancantara, Tasneem Burghleh, Sharifa Alghowinem, Cynthia Breazeal

Proceedings of ACM Human-robot Interaction (HRI) 2024

*equal contribution

AI-Audit. A Card Game to Reflect on Everyday AI Systems.

Safinah Ali, Vishesh Kumar, Cynthia Breazeal

Proceedings of Educational Advances in Artificial Intelligence (EAAI-23)

Make-a-Thon for Middle School AI Educators.

Daniella DiPaola, Katherine S Moore, Safinah Ali, Beatriz Perret, Xiaofei Zhou, Helen Zhang, Irene Lee

Proceedings of the 54th ACM Technical Symposium on Computer Science Education

Making Art with and about Artificial Intelligence: Three Approaches to Teaching AI and AI Ethics to Middle and High School Students.

Benjamin Walsh, Kayla Desportes, Irene Lee, Safinah Ali, Daniella DiPaola, Scott Sieke, Francisco Castro, William Payne, Helen Zhang

Proceedings of the 53rd ACM Technical Symposium on Computer Science Education (SIGCSE)

Introducing Variational Autoencoders to High School Students.

Zhuoyue Lyu, Safinah Ali, Cynthia Breazeal

Proceedings of Educational Advances in Artificial Intelligence (EAAI-22)

Exploring Generative Models with Middle School Students.

Safinah Ali, Daniella DiPaola, Irene Lee, Jenna Hong, Cynthia Breazeal

Proceedings of the ACM Conference on Human Factors in Computing Systems (CHI 2020)

Escape! bot: Social robots as creative problem-solving partners.

Safinah Ali, Nisha Devasia, Cynthia Breazeal

Proceedings of ACM Creativity and Cognition (ACM C&C 2021)

Can Children Emulate a Robotic Non-Player Character's Figural Creativity?

Safinah Ali, Hae Won Park, Cynthia Breazeal

Proceedings of the Annual Symposium on Computer-Human Interaction in Play (CHI Play 2020)

Developing Middle School Students’ AI Literacy.

Irene Lee, Safinah Ali, Helen Zhang, Daniella DiPaola, Cynthia Breazeal

Proceedings of the 52nd ACM Technical Symposium on Computer Science Education (SIGCSE)

What are GANs?: Introducing generative adversarial networks to middle school students.

Safinah Ali, Daniella DiPaola, Cynthia Breazeal

Proceedings of Educational Advances in Artificial Intelligence (EAAI-21)

The Contour to Classification Game: An Introduction to Neural Networks.

Irene Lee, Safinah Ali

Proceedings of Educational Advances in Artificial Intelligence (EAAI-21)

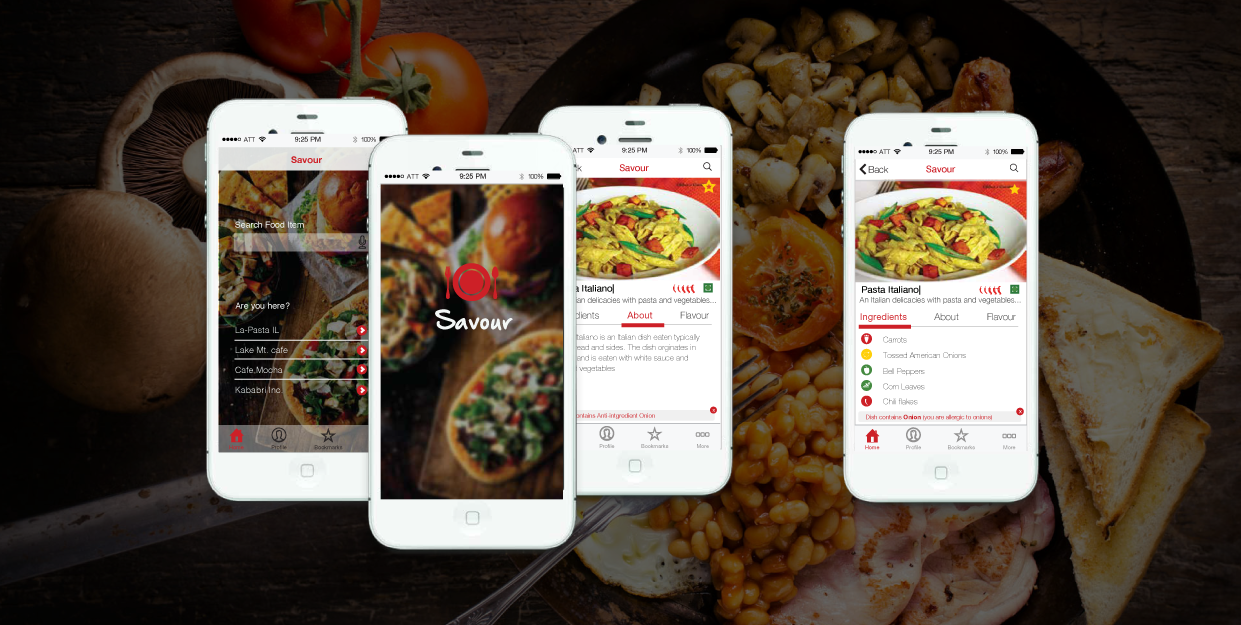

Sonify: Making Visual Graphs Accessible.

Safinah Ali, Laya Muralidharan, Felicia Alfieri, Monali Agrawal, Jacob Jorgensen

Proceedings of the 1st International Conference on Human Interaction and Emerging Technologies (IHIET 2019)

Constructionism, Ethics, and Creativity: Developing Primary and Middle School Artificial Intelligence.

Safinah Ali, Blakeley H Payne, Randi Williams, Hae Won Park, Cynthia Breazeal

Proceedings of International Joint Conferences on Artificial Intelligence 2019 (IJCAI 2019)

Can Children Learn Creativity from a Social Robot?

Safinah Ali, Tyler Moroso, Cynthia Breazeal

Proceedings of ACM Creativity and Cognition (ACM C&C 2019)

A Good Scare: Leveraging Game Theming and Narrative to Impact Player Experience.

Jarrek R Holmes, Alexandra To, Fengyi Zhang, Se Eun Park, Safinah Ali, Zhen Bai, Geoff Kaufman, Jessica Hammer

Extended Abstracts of the 2019 CHI Conference on Human Factors in Computing Systems (CHI 2019)

A Social Robot System for Modeling Children's Word Pronunciation: Socially Interactive Agents Track.

Samuel Spaulding, Huili Chen, Safinah Ali, Michael Kulinski, Cynthia Breazeal

Proceedings of the 17th International Conference on Autonomous Agents and MultiAgent Systems (AAMAS 2018)

Transition from Game Driven Goal Delineation to Goal Driven Game Design in Tandem Transformational Game Design.

Safinah Ali, Alexandra To, Elaine Fath, Zhen Bai, Jessica Hammer, Geoff Kaufman

Proceedings of the International Academic Conference on Meaningful Play 2018.

Analytic Frameworks for Audience Participation Games and Tools.

Safinah Ali, Rachel Moeller, Judith Choi, Jessica Hammer

Proceedings of Spectating Play 2017

Tandem Transformational Game Design: A Game Design Process Case Study.

Alexandra To, Elaine Fath, Eda Zhang, Safinah Ali, Catherine Kildunne, Anny Fan, Jessica Hammer, Geoff Kaufman

Proceedings of the International Academic Conference on Meaningful Play 2016.

Integrating Curiosity and Uncertainty in Game Design.

Alexandra To, Safinah Ali, Geoff Kaufman, Jessica Hammer

Proceedings of the First International Joint Conference of DiGRA and FDG. (DiGRA 2016)

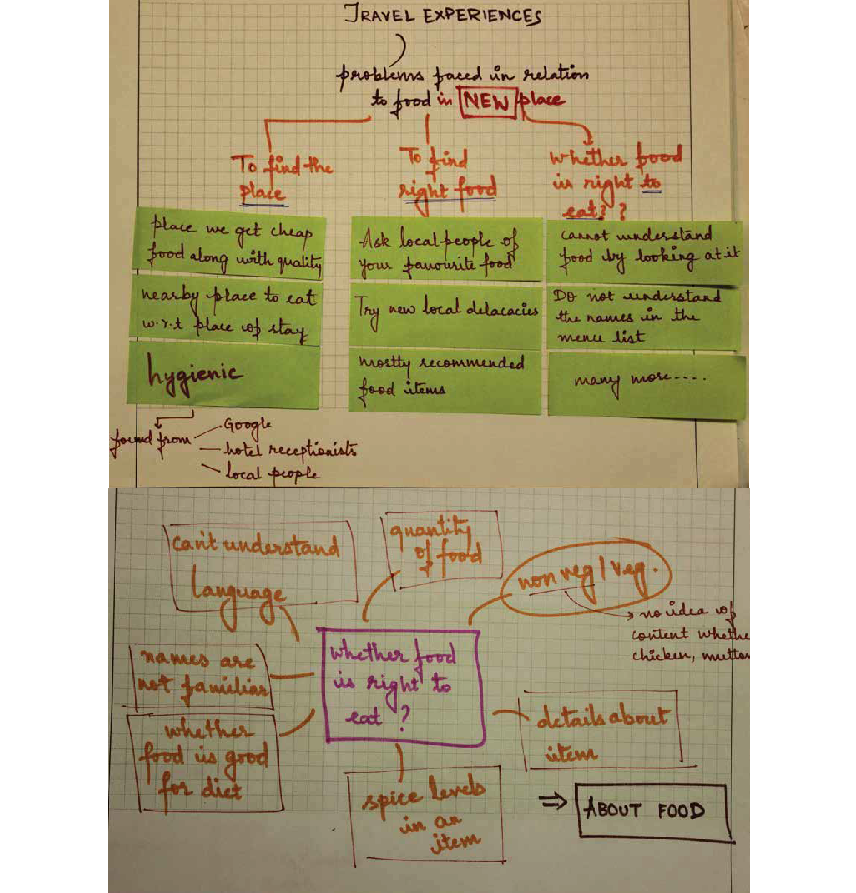

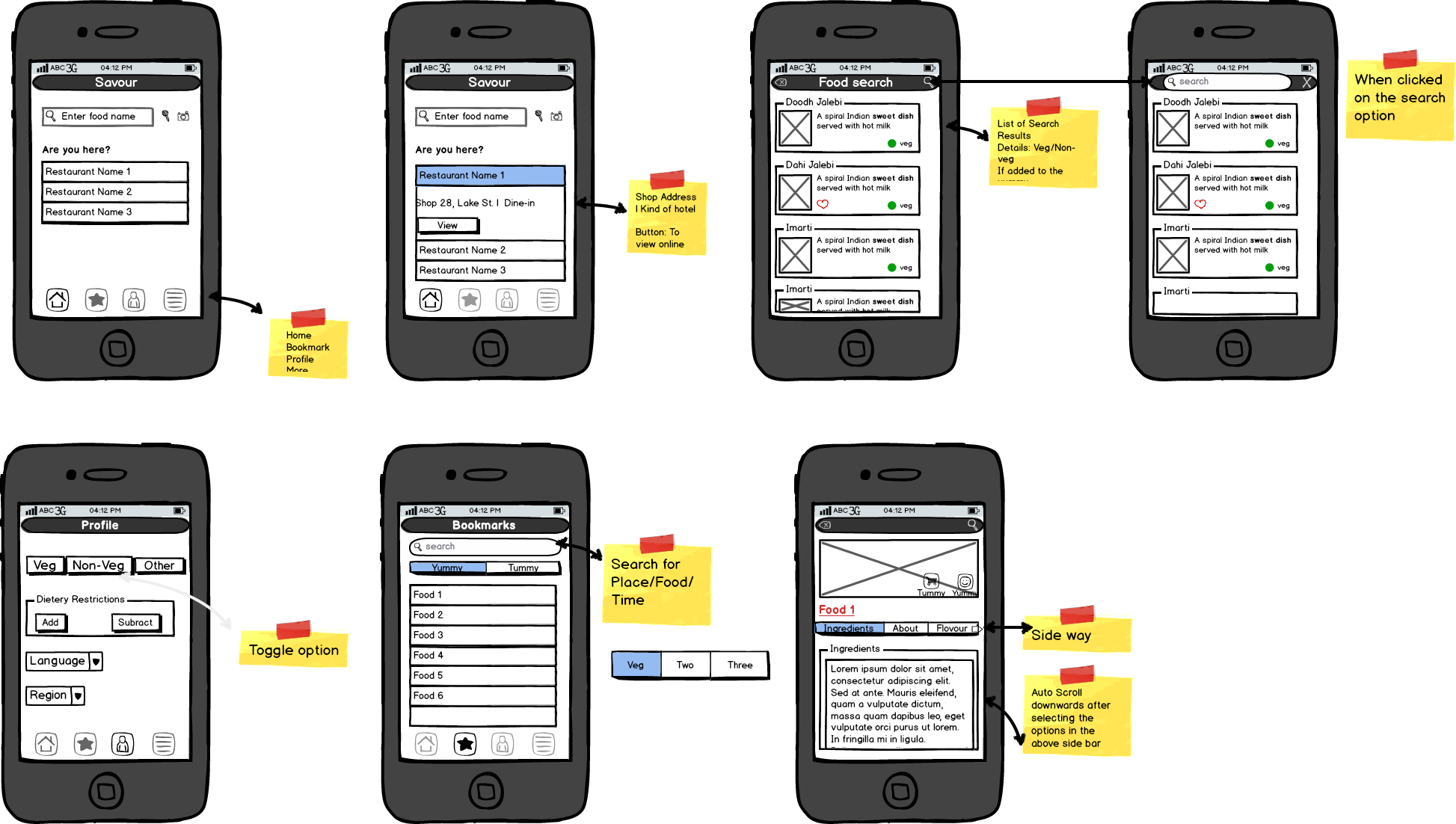

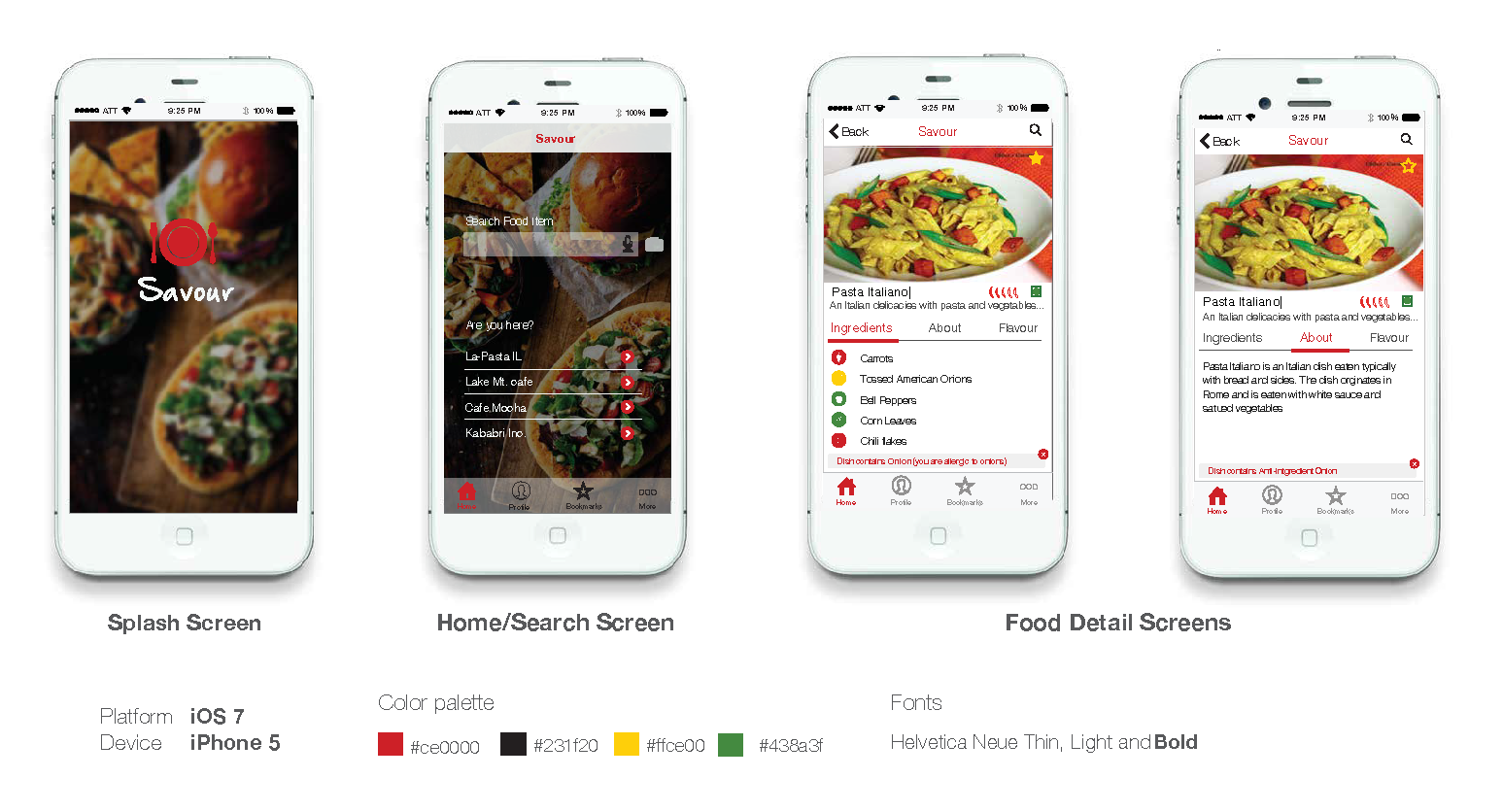

CaptuRing: A Tangible Imaging Tool for Brainstorming.

Bhawna Agarwal, Vikas Goel, Safinah Ali, Neeraj Talukdar, Keyur Sorathia

Proceedings of the India HCI 2014 ACM Conference on Human Computer Interaction (India HCI 2014)

Fun Short Papers

These are my favs. For a full list, refer to Google Scholar.

Readying Robots for the Home: The Evolution of Human-Robot Interaction

Safinah Ali, Daniella DiPaola

XRDS: Crossroads, The ACM Magazine for Students

Using Generative AI for Fairness Inquiry

Safinah Ali, Cynthia Breazeal

Teaching Algorithmic Justice - AERA 2024

Making Art with and about Artificial Intelligence: Three Approaches to Teaching AI and AI Ethics to Middle and High School Students

Benjamin Walsh, Safinah Ali, Francisco Castro, Kayla Desportes, Daniella DiPaola, Irene Lee, William Payne, Scott Sieke, Helen Zhang

Proceedings of the 53rd ACM Technical Symposium on Computer Science Education

Telling creative stories using generative visual aids

Safinah Ali, Devi Parikh

Workshop on Machine Learning for Creativity and Design, NeurIPS 2021.

Designing games for enabling co-creation with social agents

Safinah Ali, Nisha Devasia, Cynthia Breazeal

Workshop on Designing Games for and with Children at Interaction Design for Children (IDC) 2021.

Projects

Journey

It has been an adventure!

-

1993-2011

Grew up and schooled, Nagpur

Grew up in a beautiful small town. Learned the art of terrible jokes. Sang my first songs and read my first books. Developed a liking for comics.

-

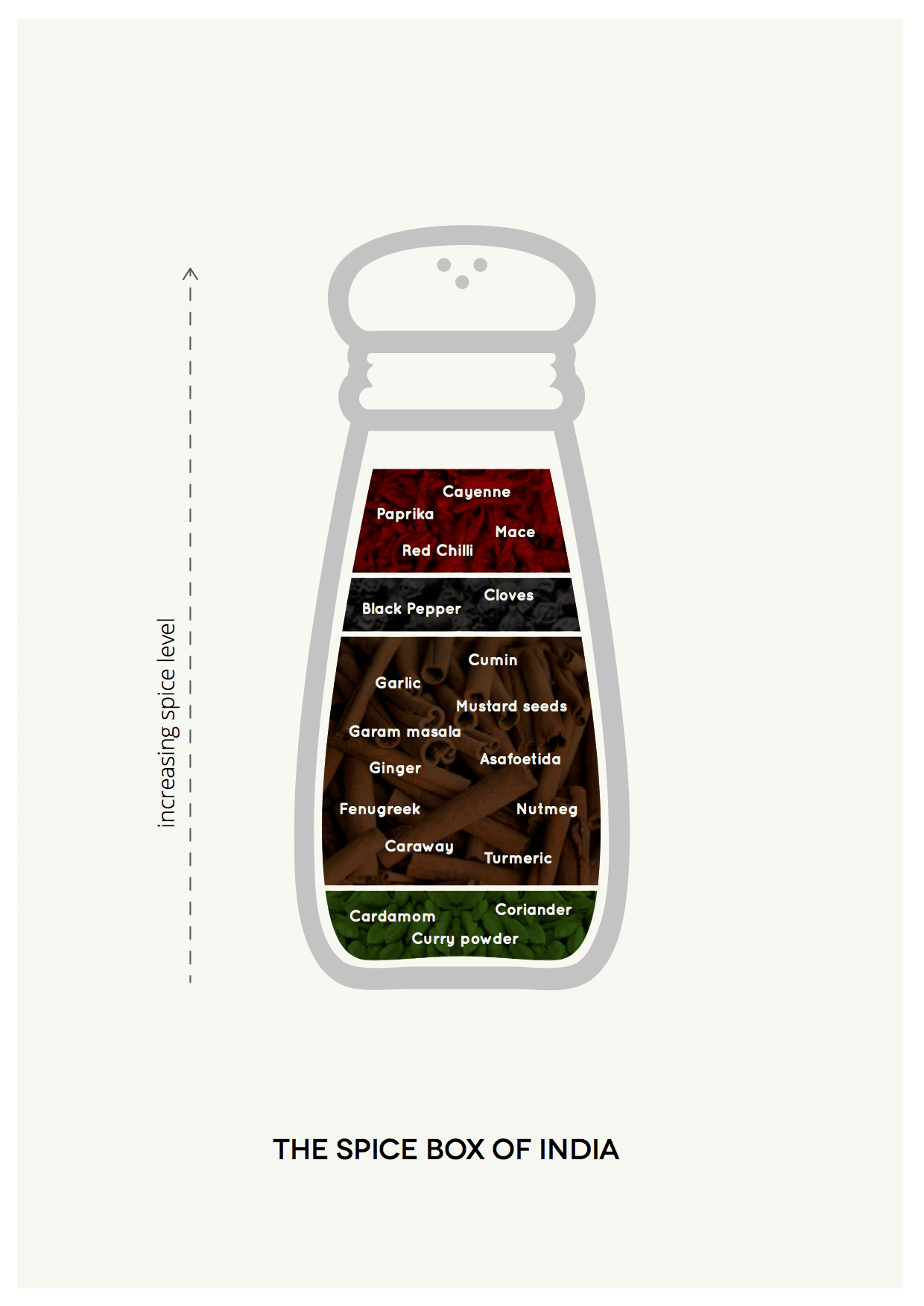

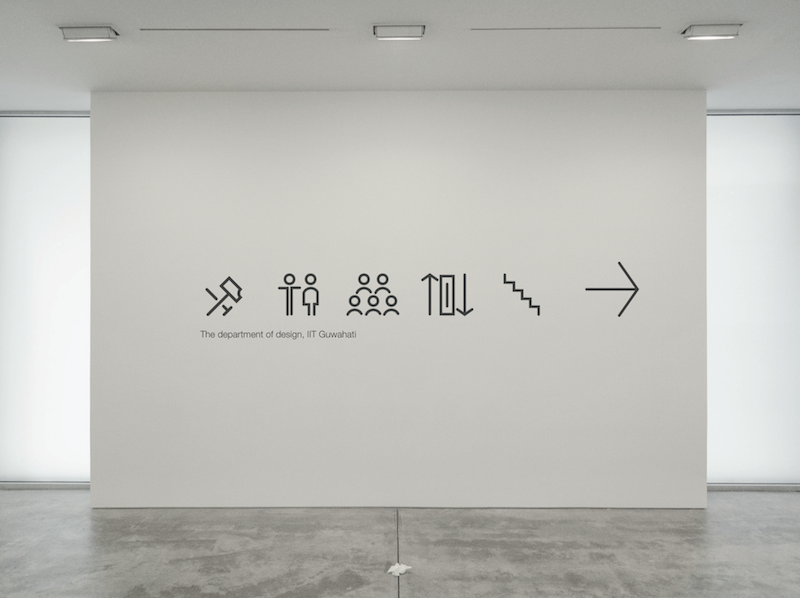

2011-2015

Bachelor's in Design, IIT Guwahati

Learned to design a thing or two. Met the smartest people. Built stuff. Broke stuff. Learned to fix stuff. Designed my first product.

-

Summer 2013

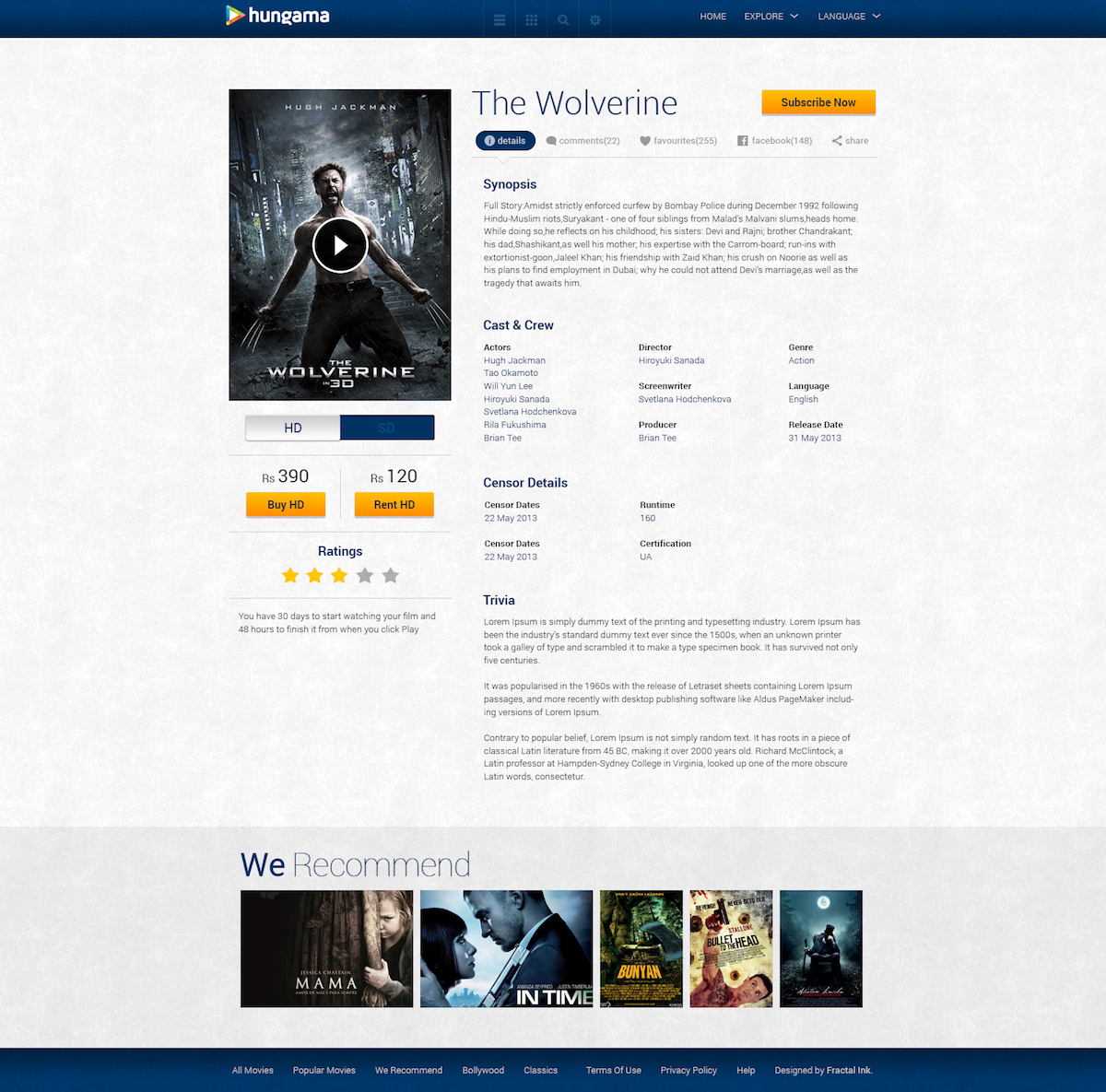

UX Design Intern, Fractal Ink Design Studio

Kept my eyes and ears open. Got my hands dirty trying a range of UX practices. Took ownership of my projects. Worked directly with clients. Was guided by amazing mentors. Designed beautiful interfaces.

-

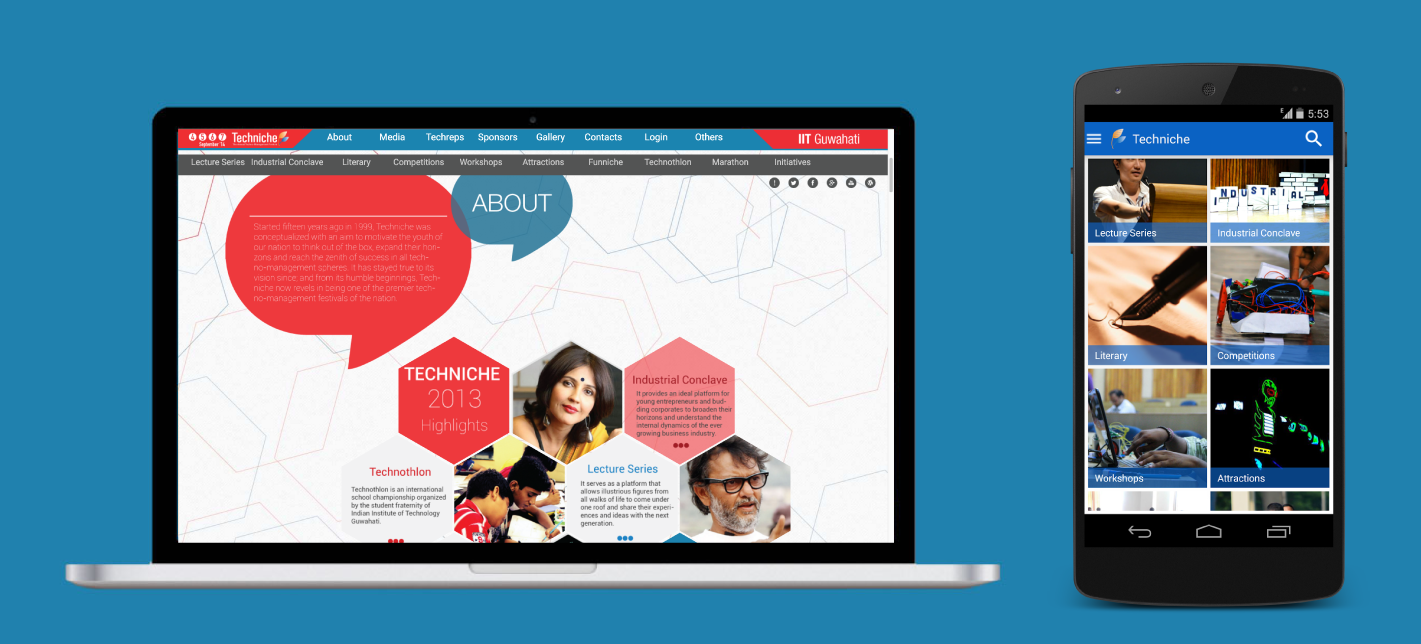

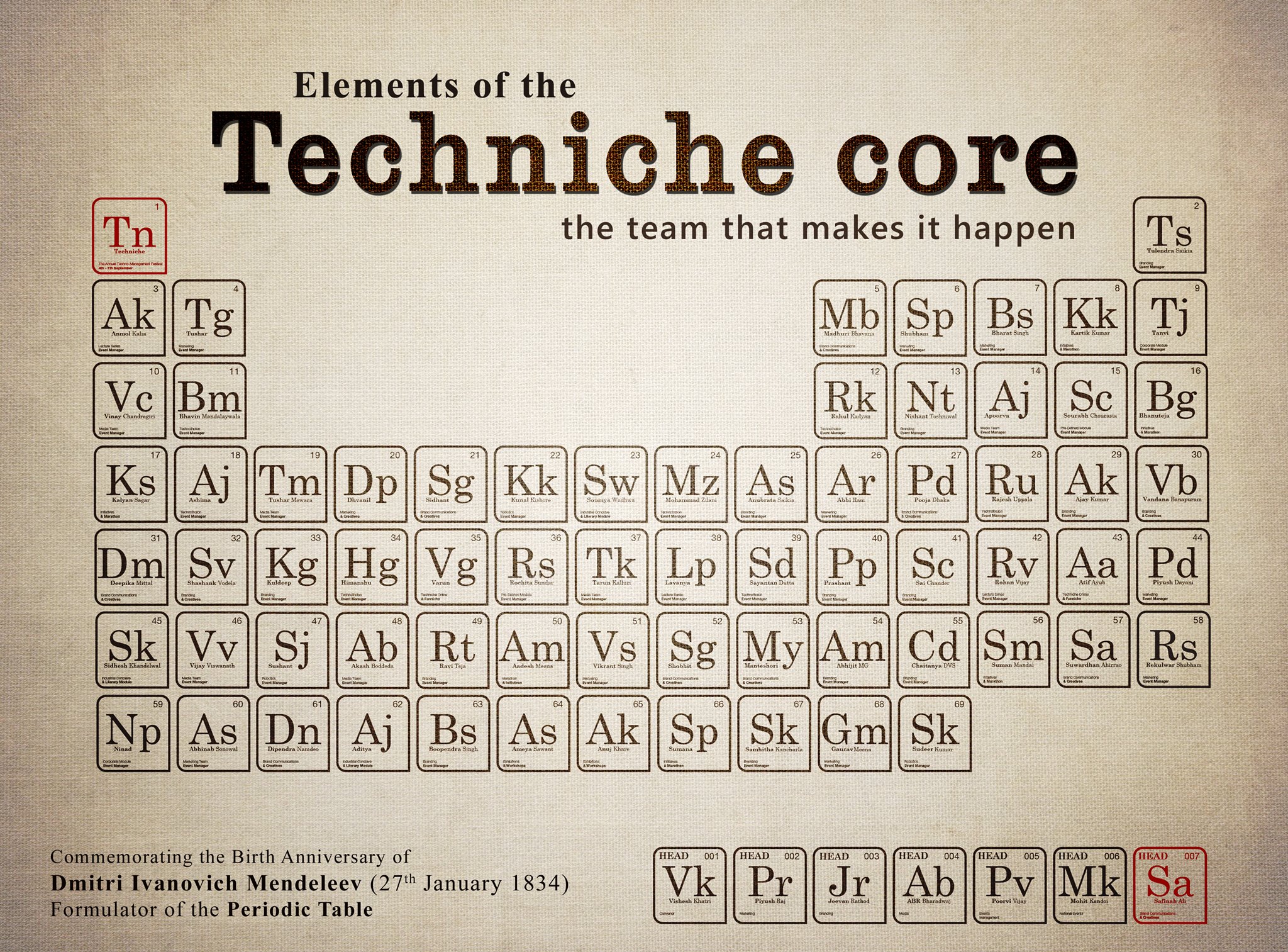

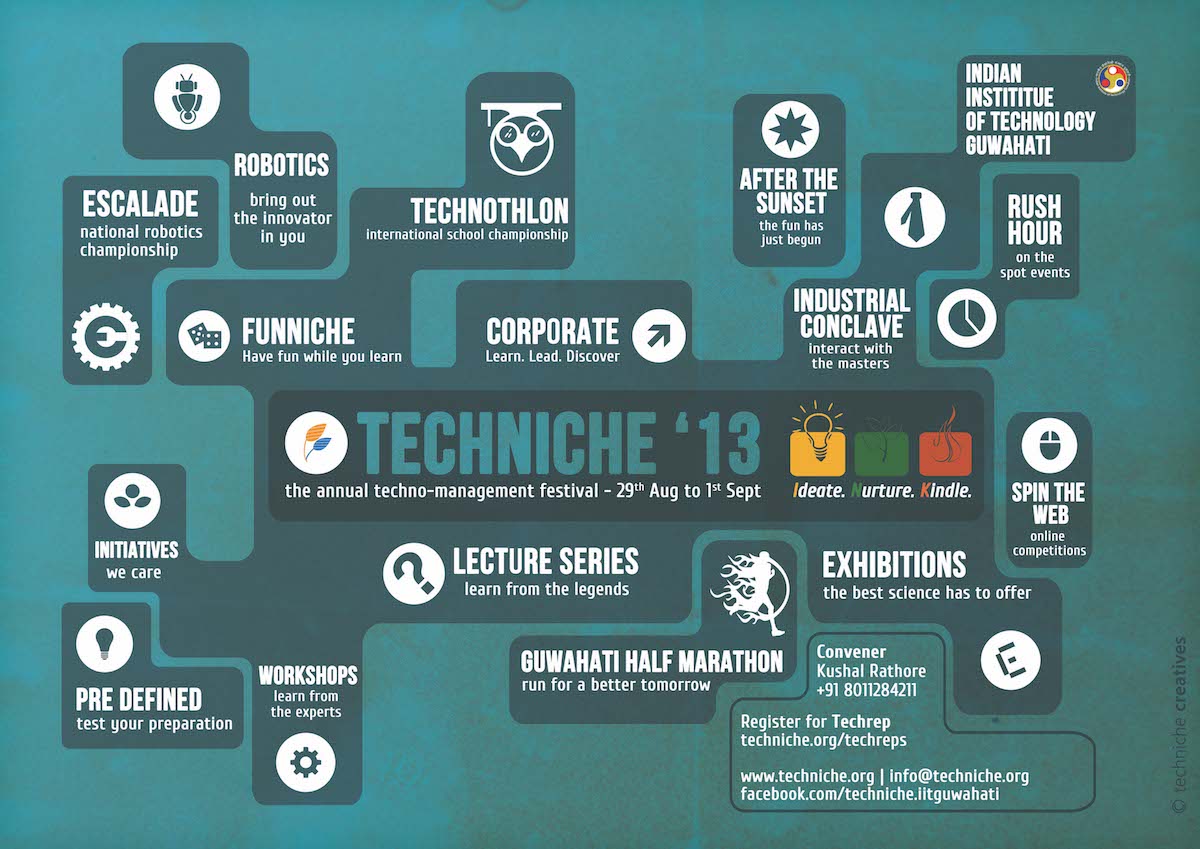

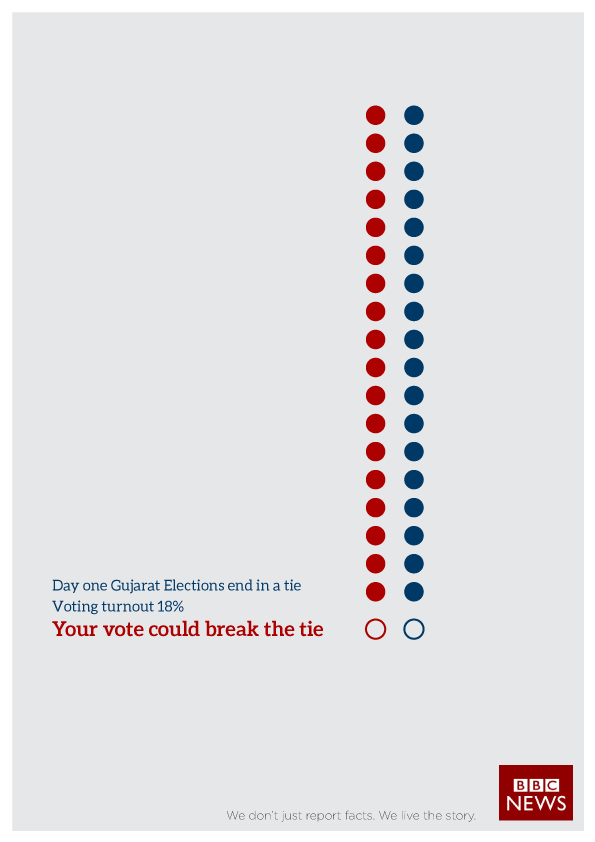

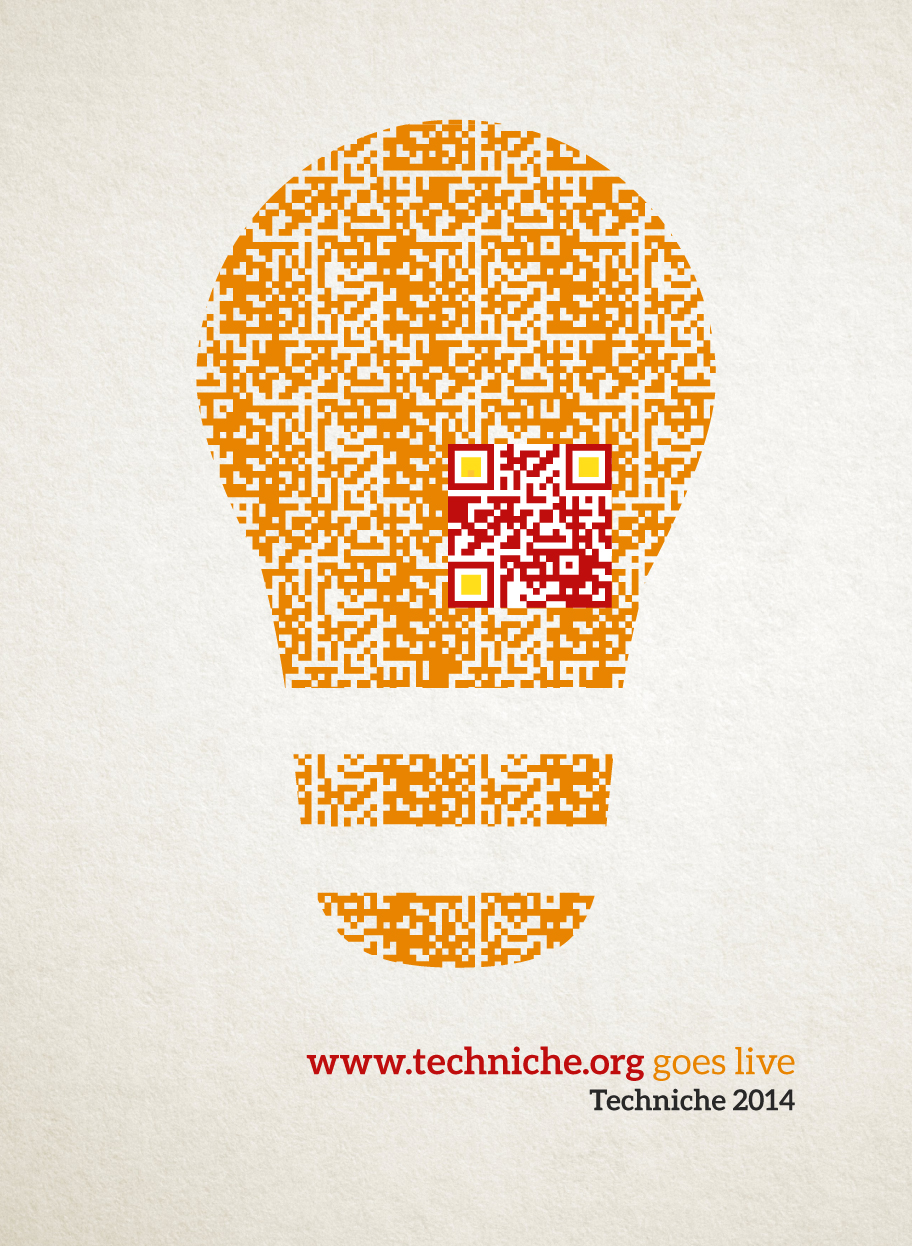

August 2013 - September 2014

Creative Lead, Techniche

Joined Techniche (the annual techno-management festival of IIT Guwahati) as a designer in 2012. Led a team of 12 designers to strategize branding and overall design requirements of the 2014 edition of Techniche.

-

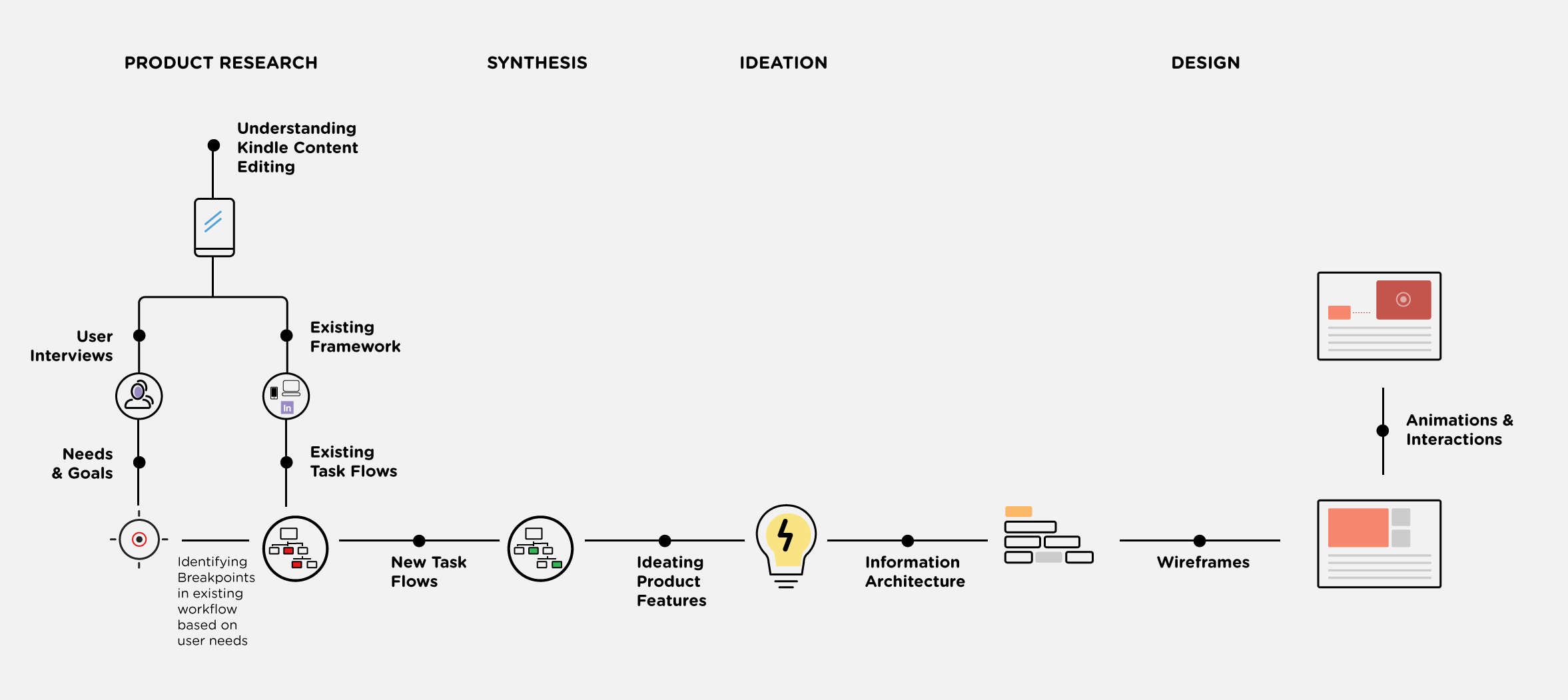

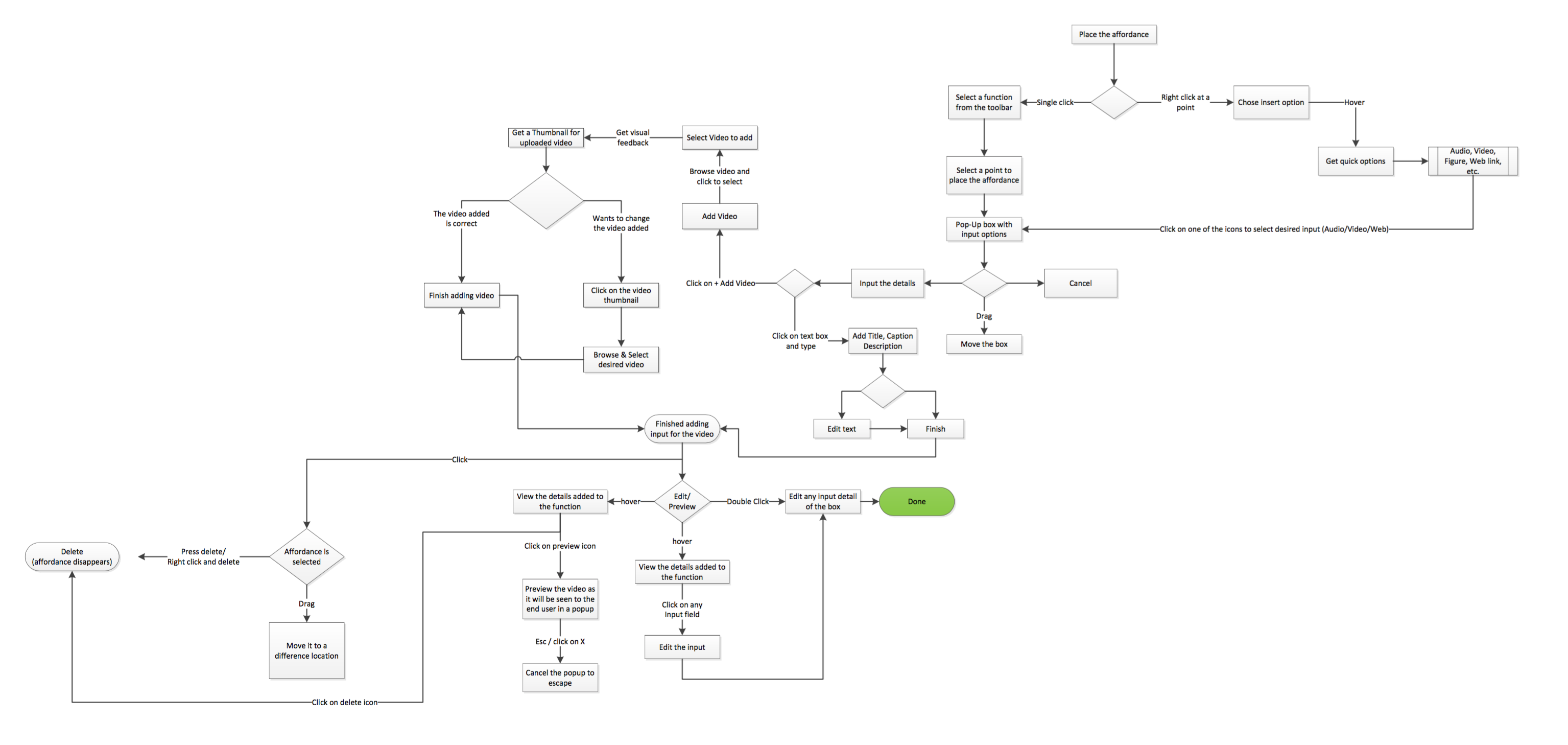

Summer 2014

UX Design Intern, Amazon.com

Practiced User Centered Design. Worked in a diverse team. Learned from the experts. Observed how the corporate giants work.

-

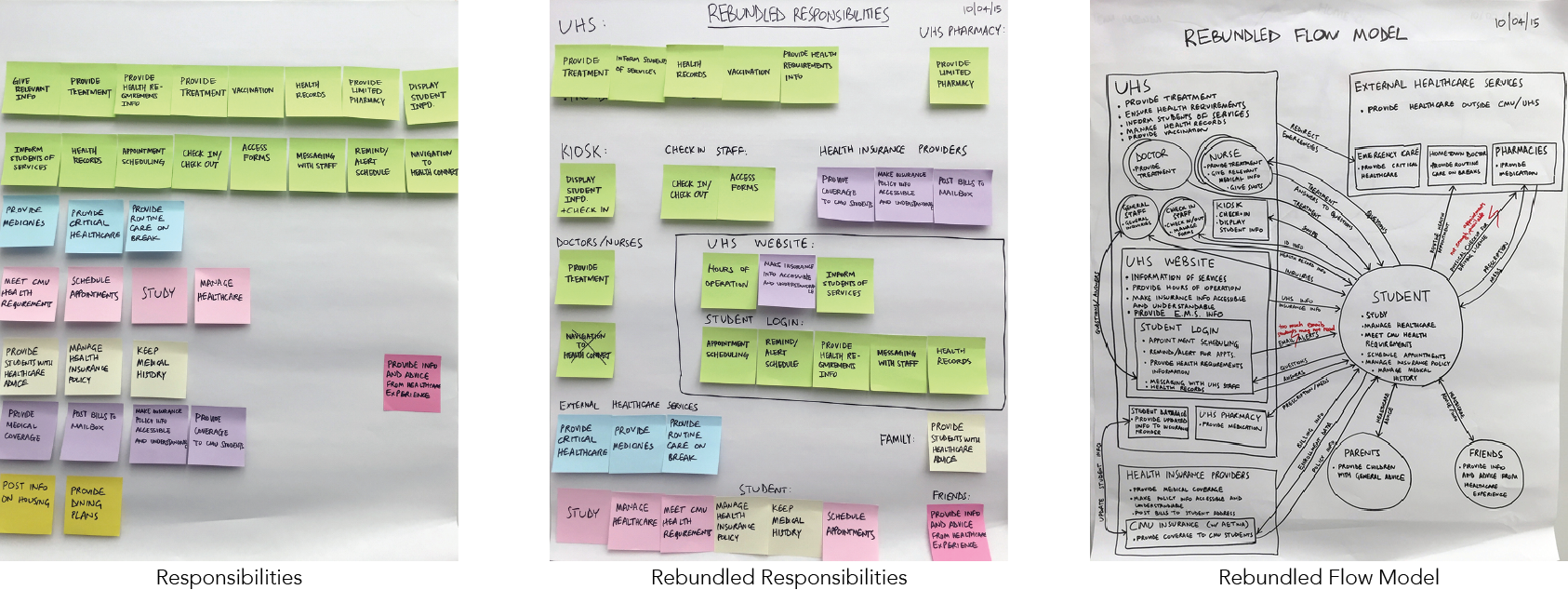

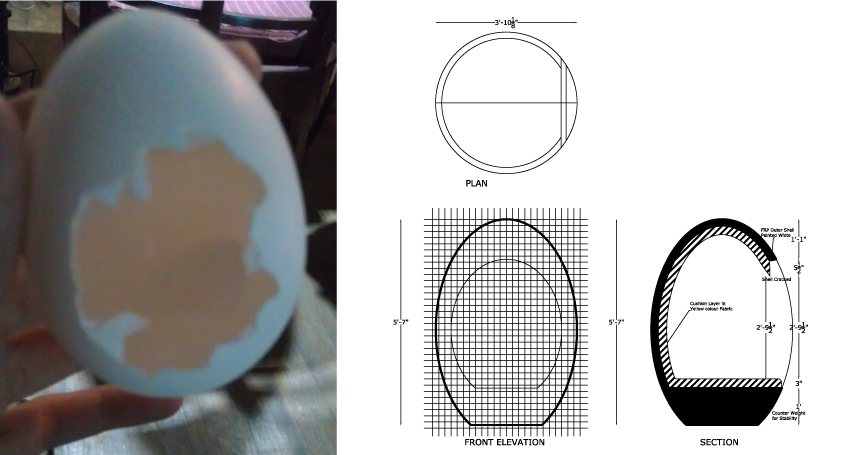

January 2014 - May 2015

HCI Research Student, Embedded Interactions Laboratory

Worked in health and tech. Worked with the users. Built some more stuff. Analyzed data. Wrote my bachelor's thesis.

-

August 2015 - August 2016

Master's in Human-Computer Interaction, CMU

Embraced challenges. Acquired a spectrum of skills. Turned ideas into products. Explored new cultures.

-

August 2015 - February 2016

Interaction Design Education Summit 2016

Worked with the IxDA team to organize the IxD Education Summit. Designed the logo and handled the website and schedule for the summit.

-

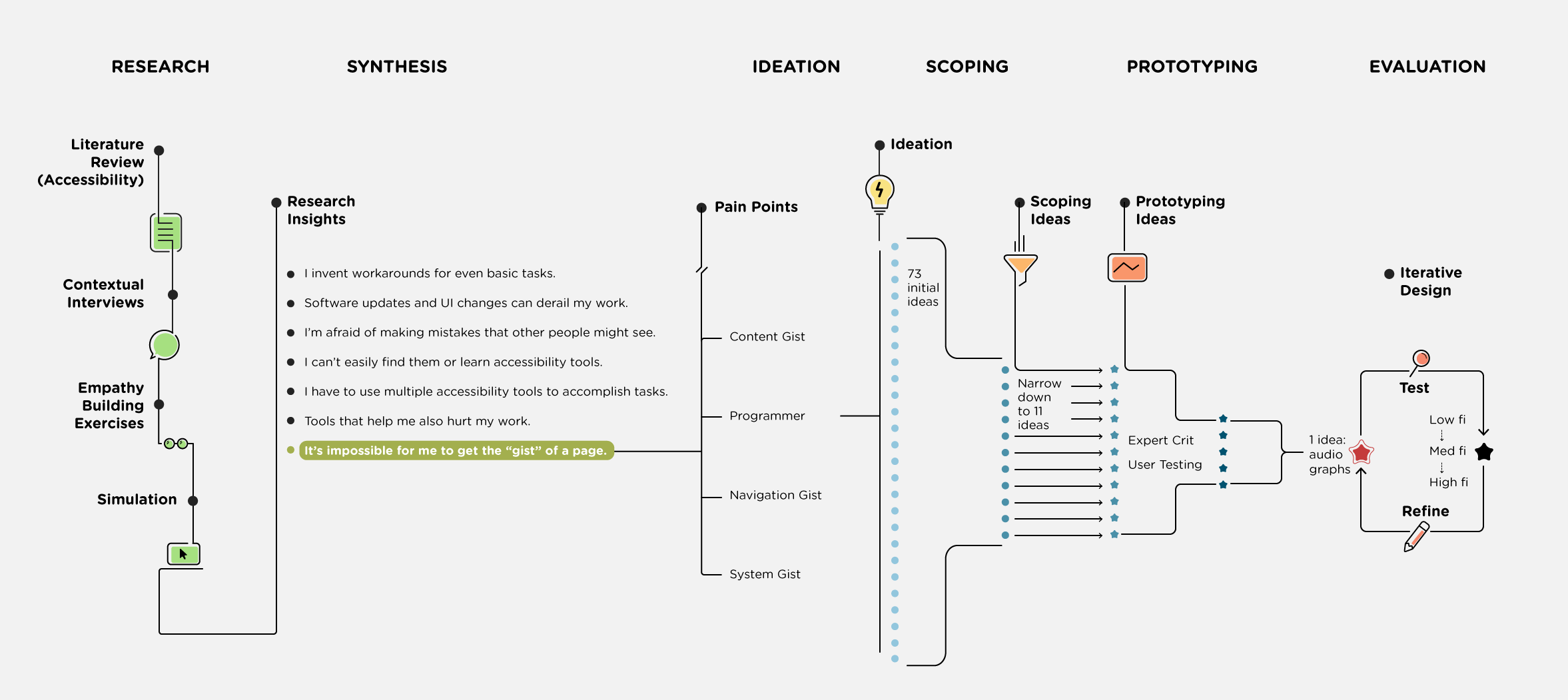

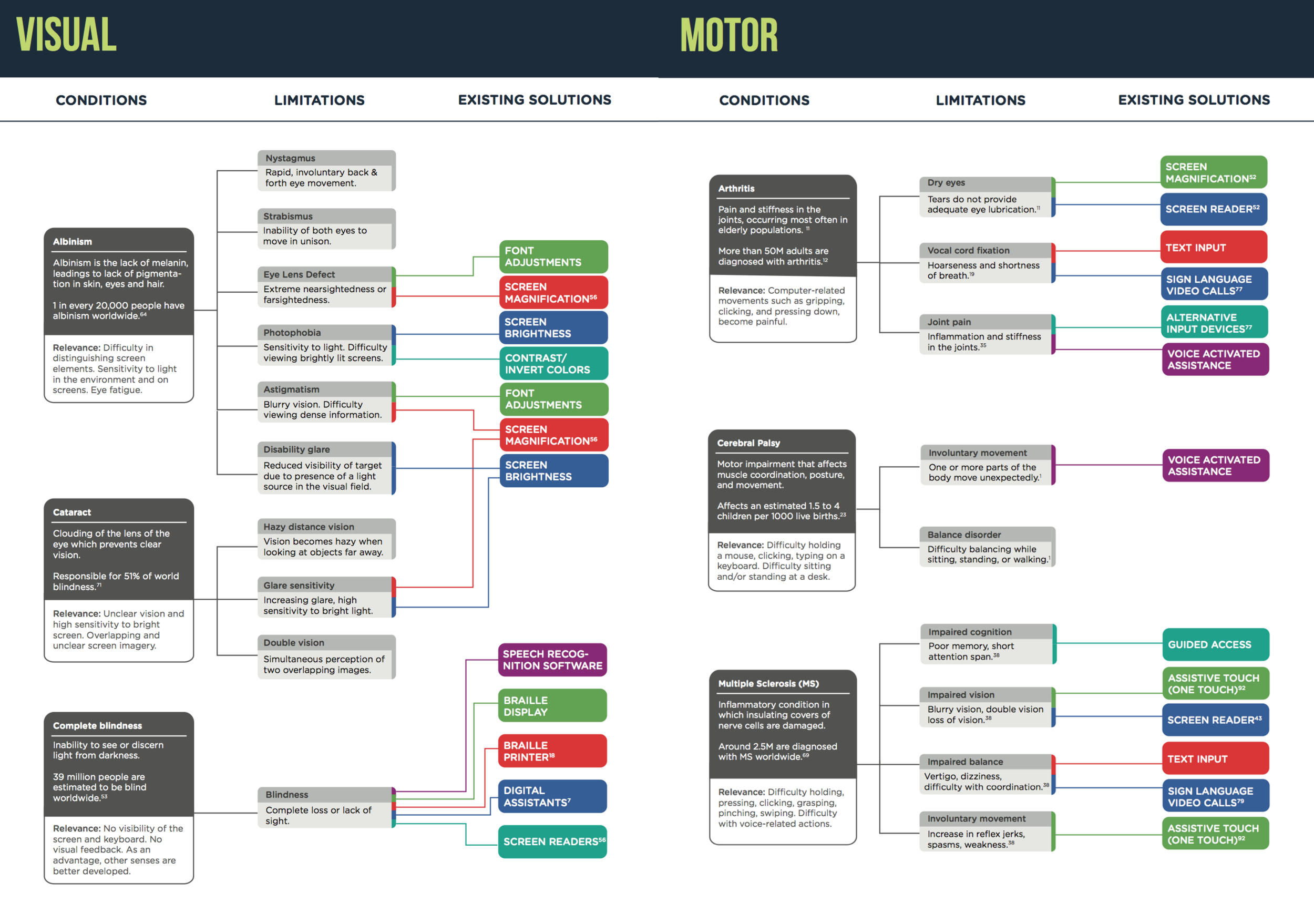

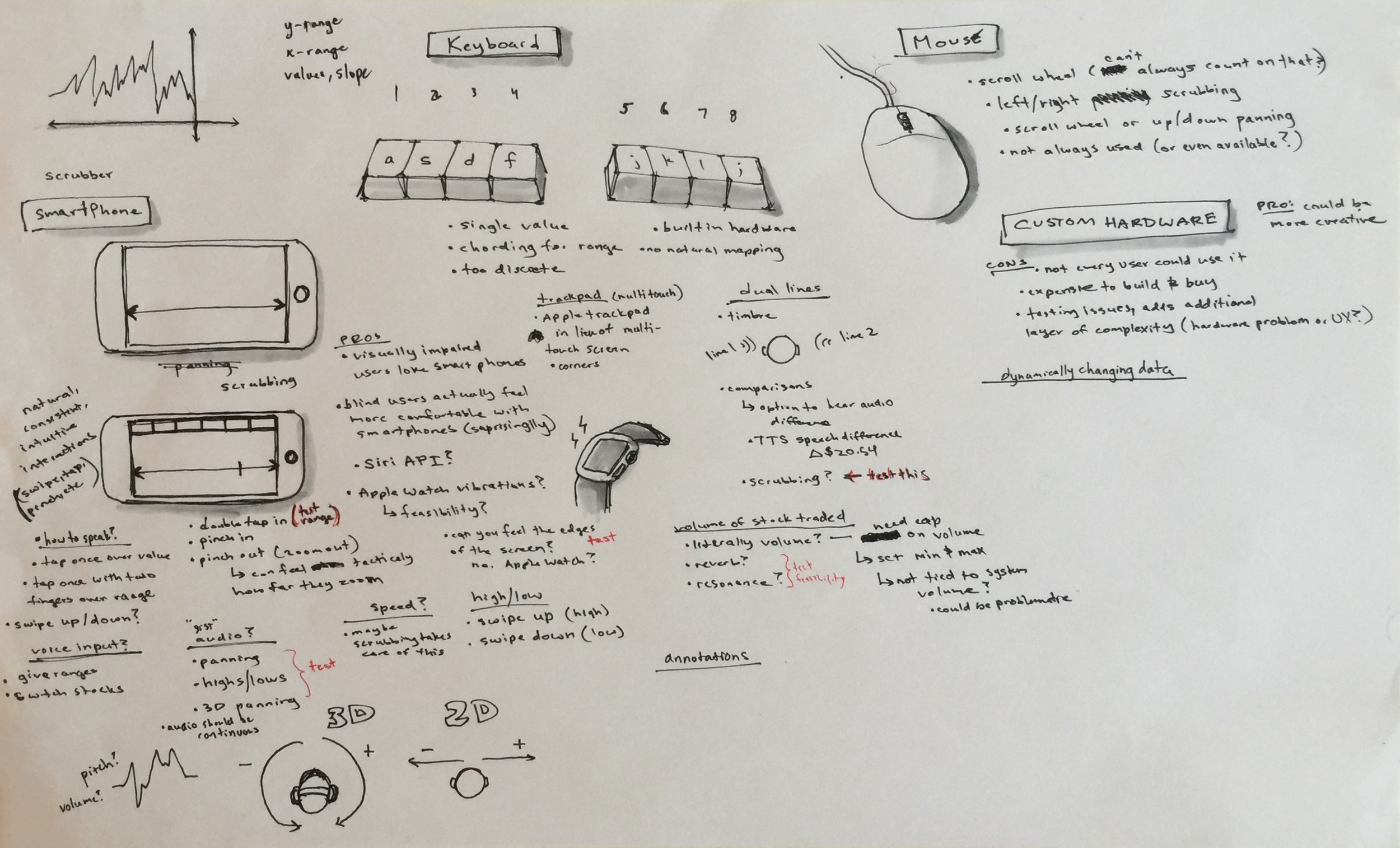

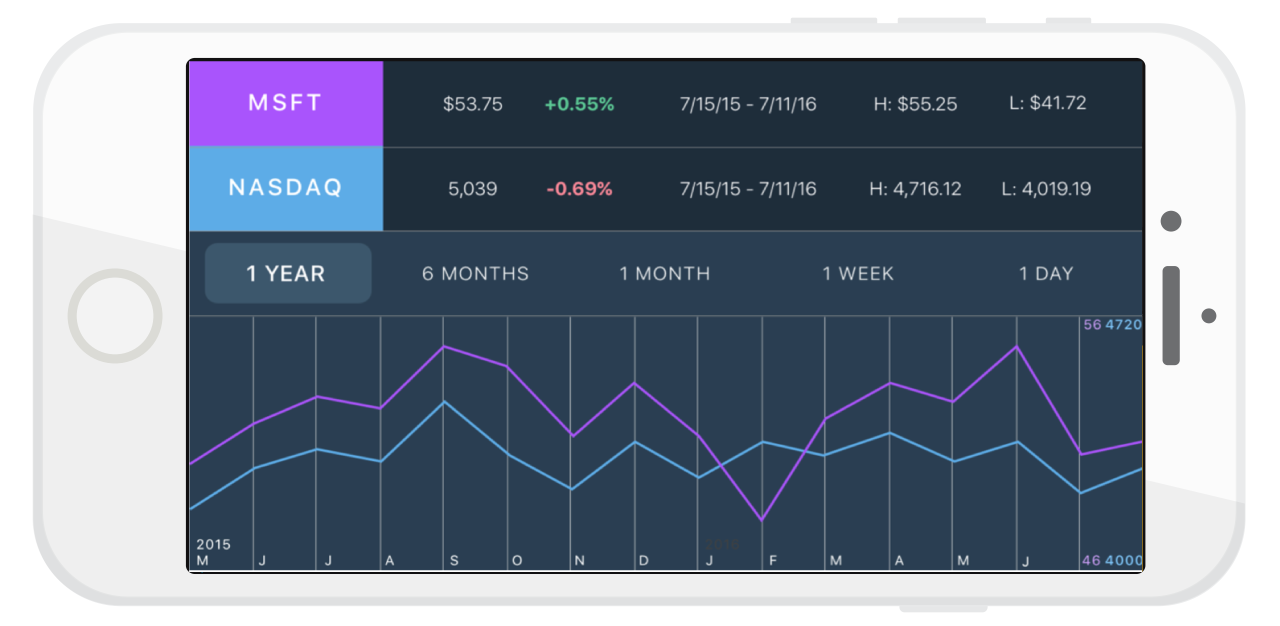

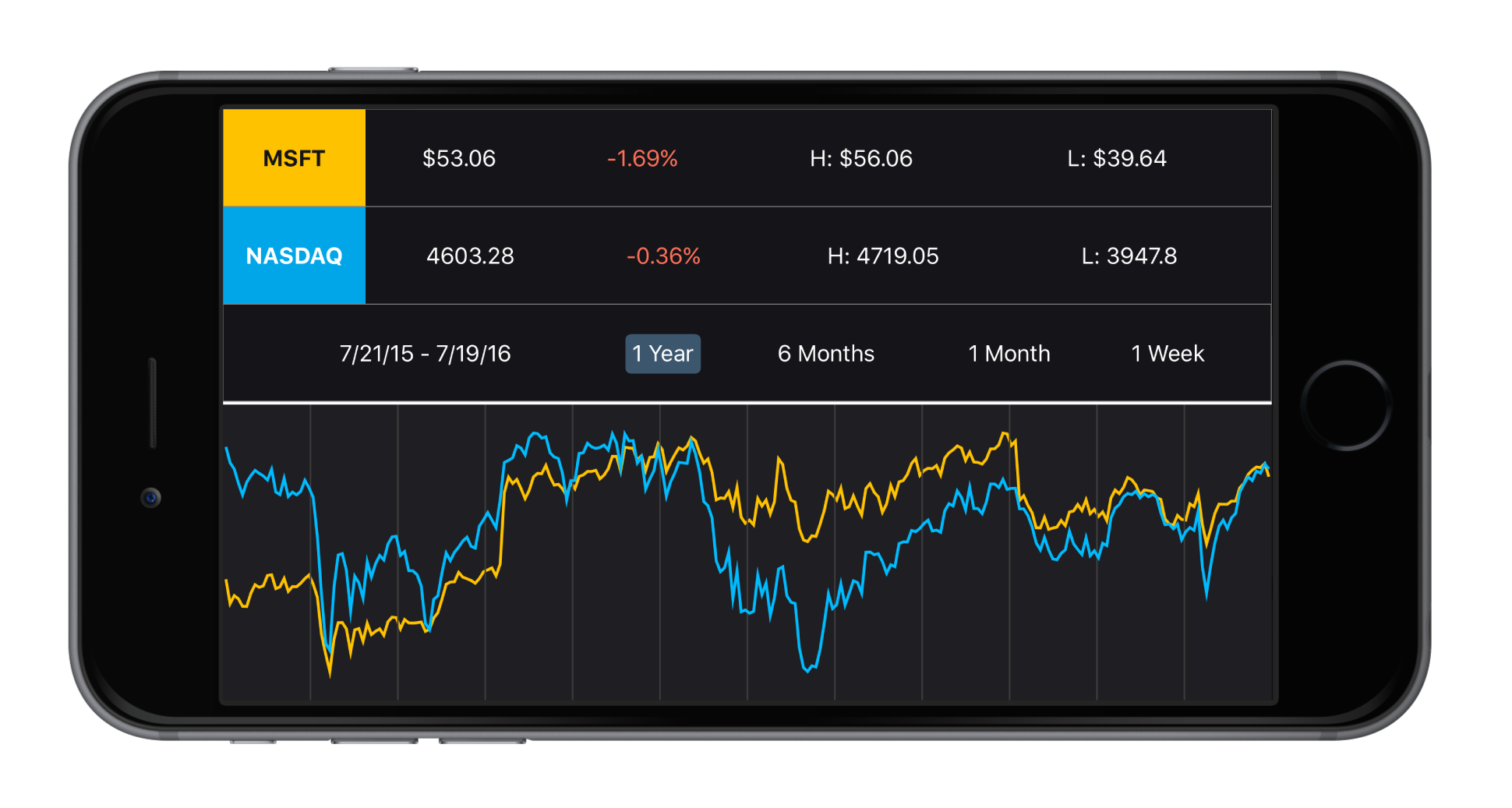

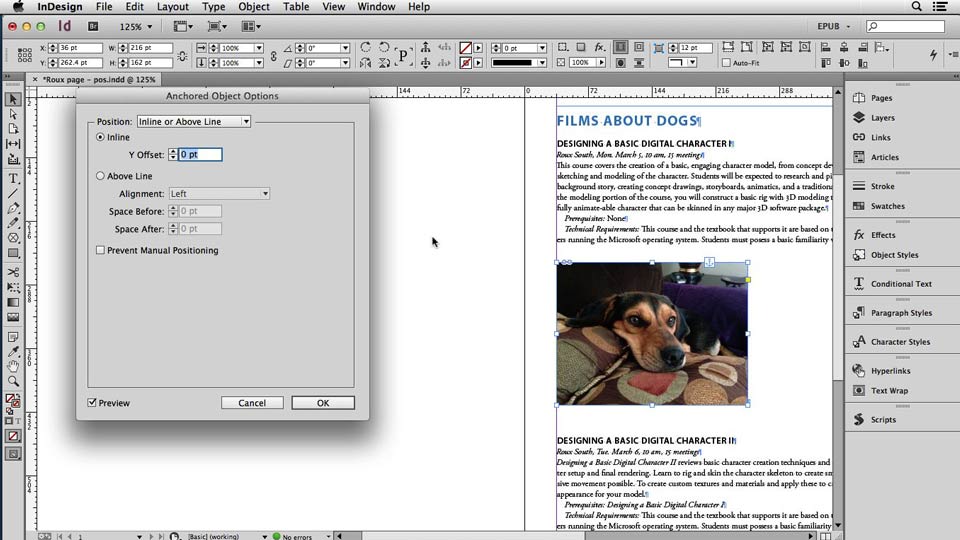

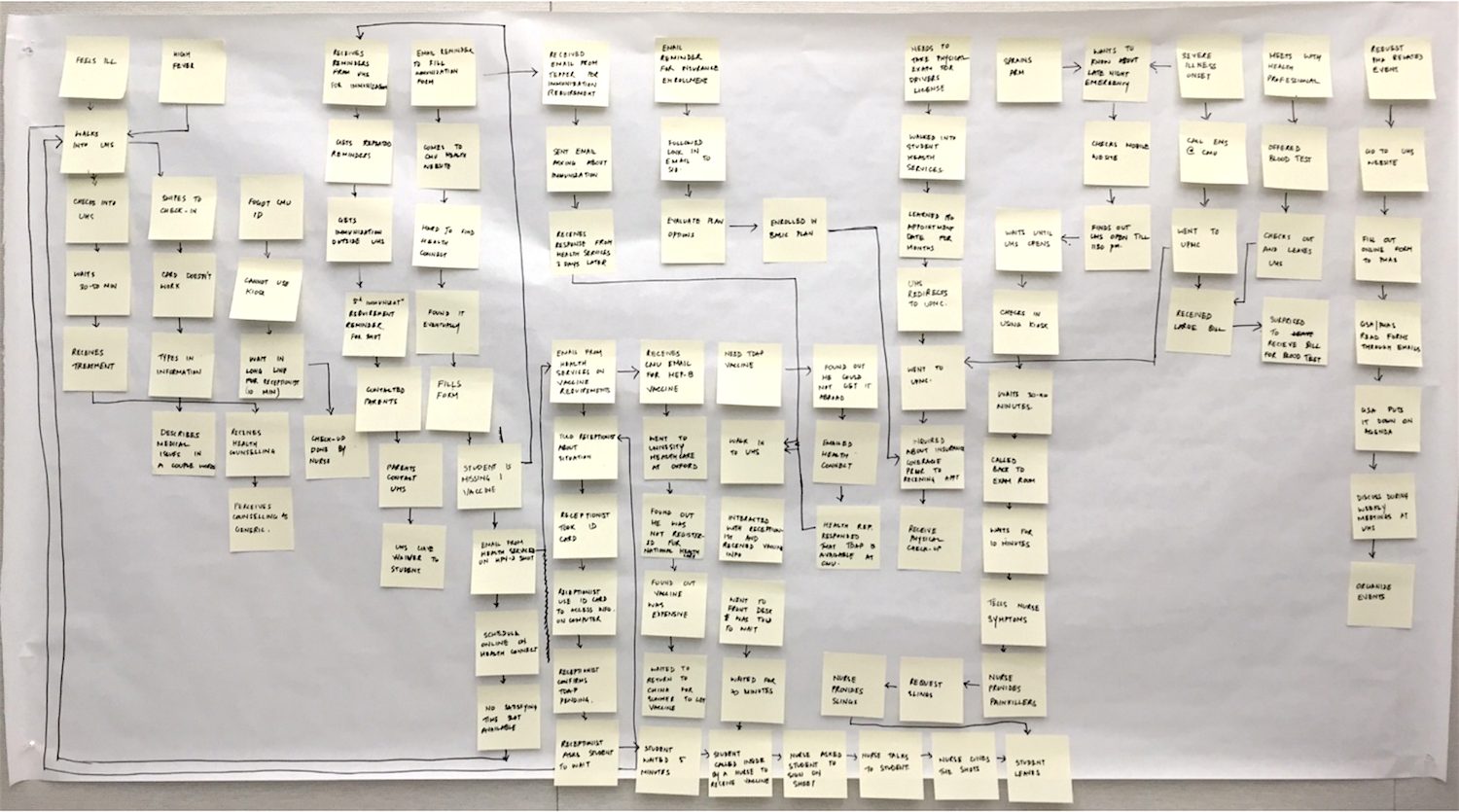

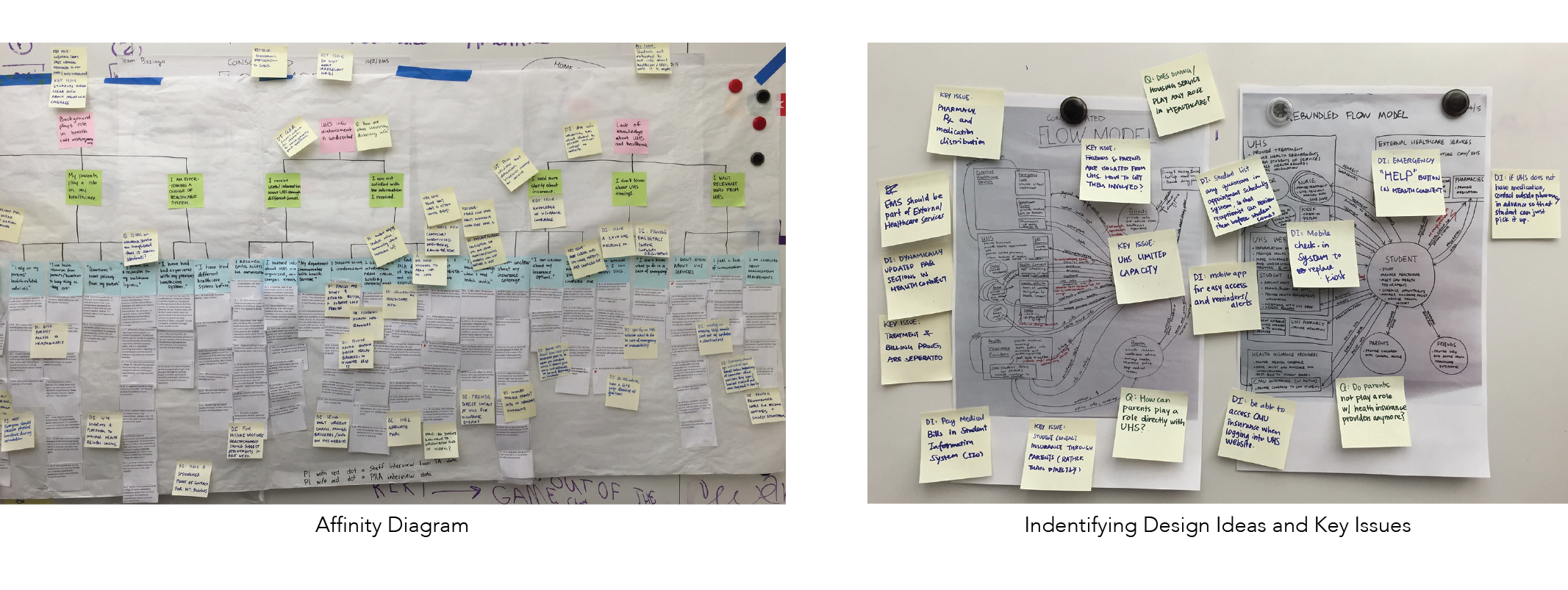

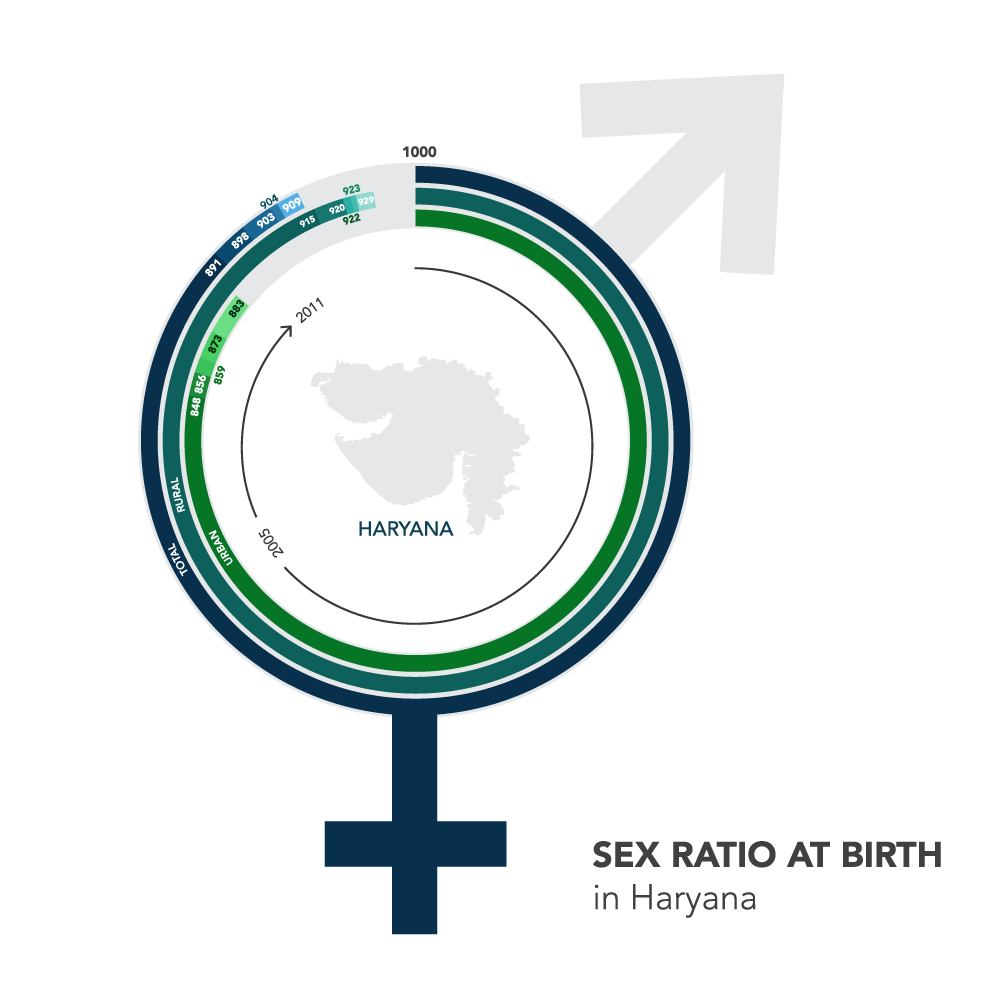

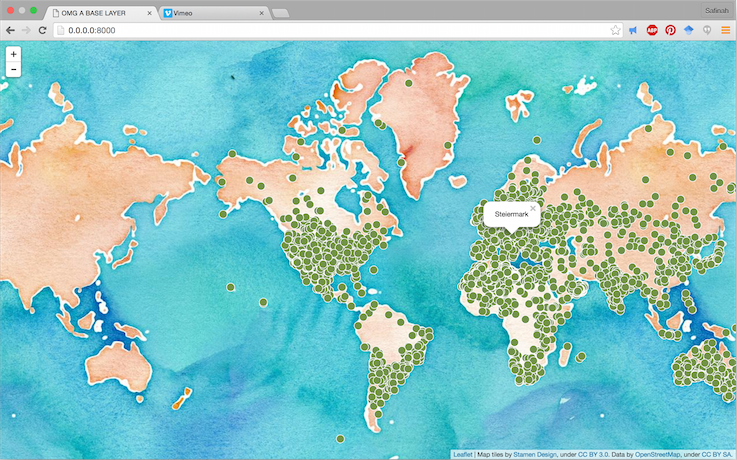

January 2016 - August 2016

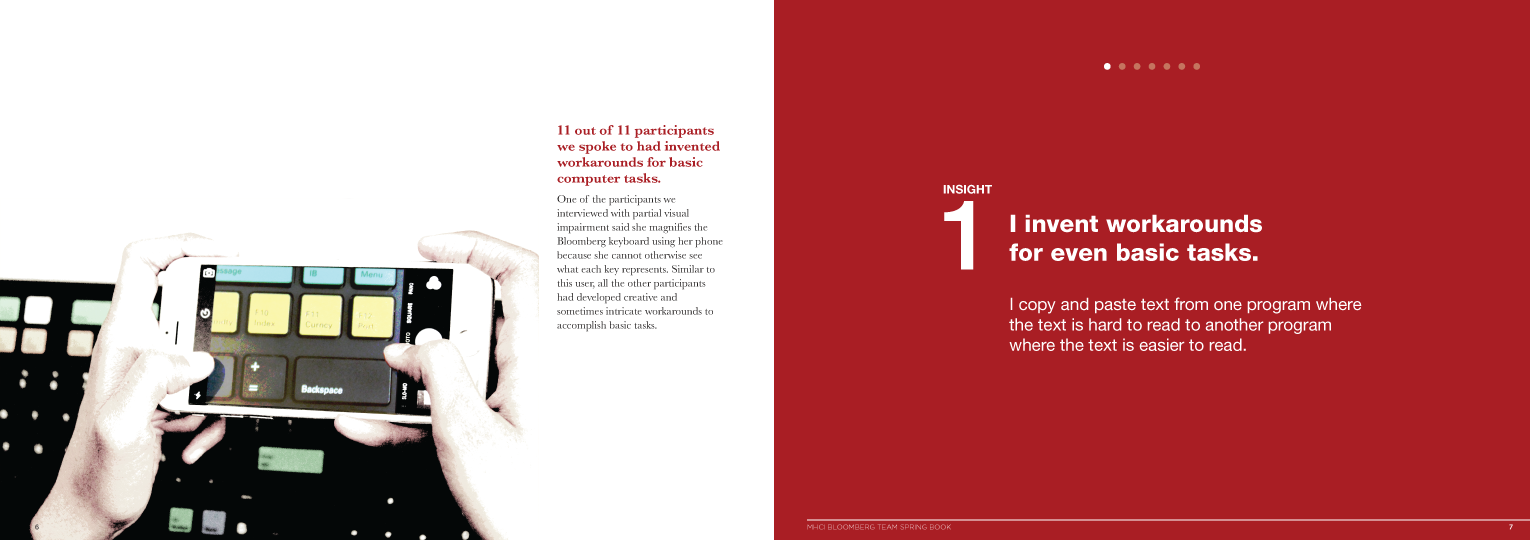

MHCI Capstone Project, Bloomberg

Conducted a research study on accessibility in desktop computers. Designed an app to make stock price charts more accessible.

-

September 2016 - March 2017

Games Research - Twitch

Managed projects, analyzed user data, ran studies, and designed games on Twitch. Worked with an amazing team of game designers and researchers.

-

March 2017 - August 2017

Product Designer, vArmour

Designed the user experience for several vertical products delivering data center and cloud security through micro-segmentation.

-

September 2017 - now

Research Assistant - MIT Media Lab

Human-robot interaction, AI education, Embodied creativity

-

Summer 2021

Research Intern, Facebook AI Research (FAIR)

Designing creative human-AI interactions.

-

More to come!